Improving the performance of the GPT-4-powered chatbot by 1900% with Pinecone, LangChain, and embeddings

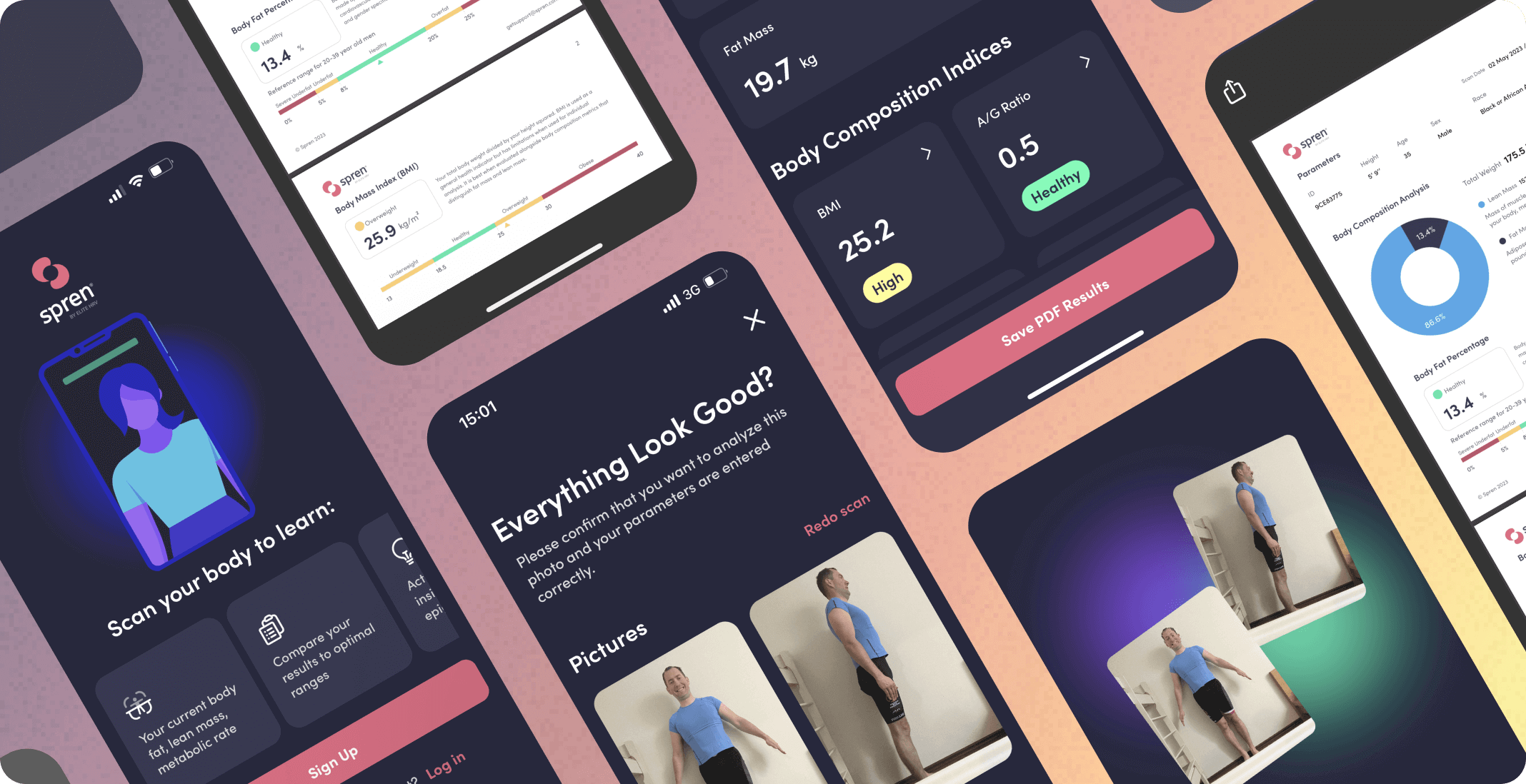

Spren

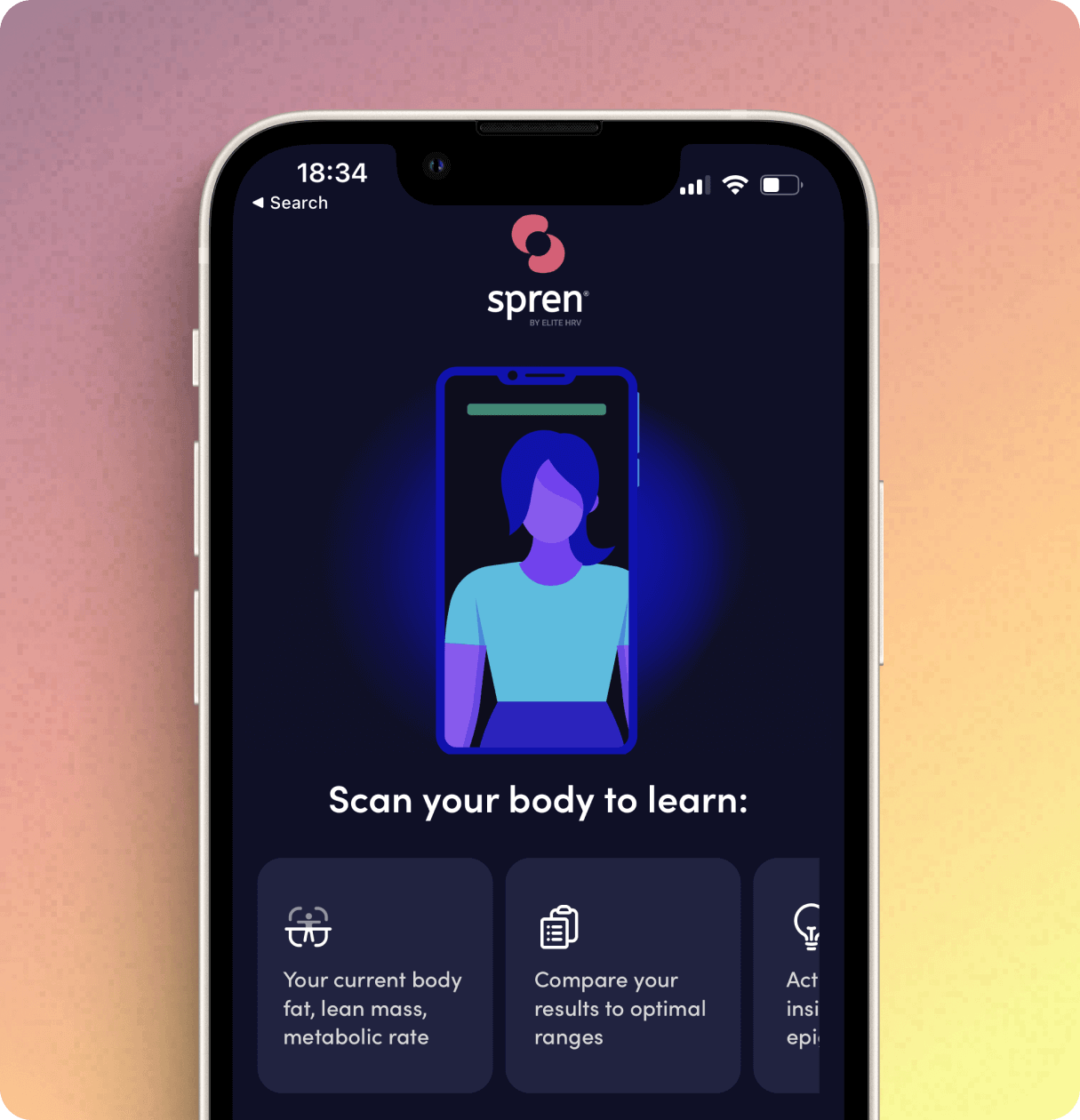

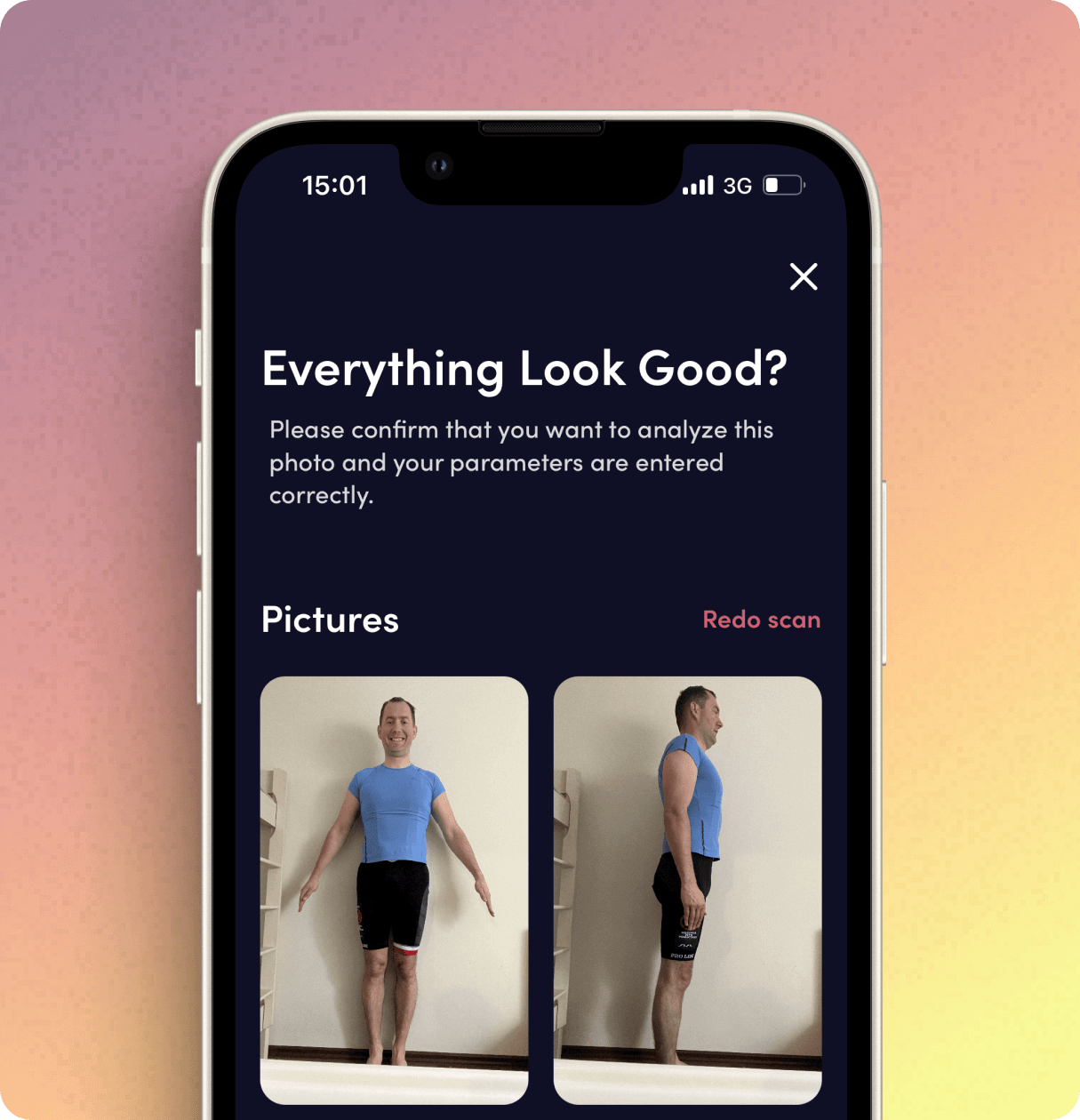

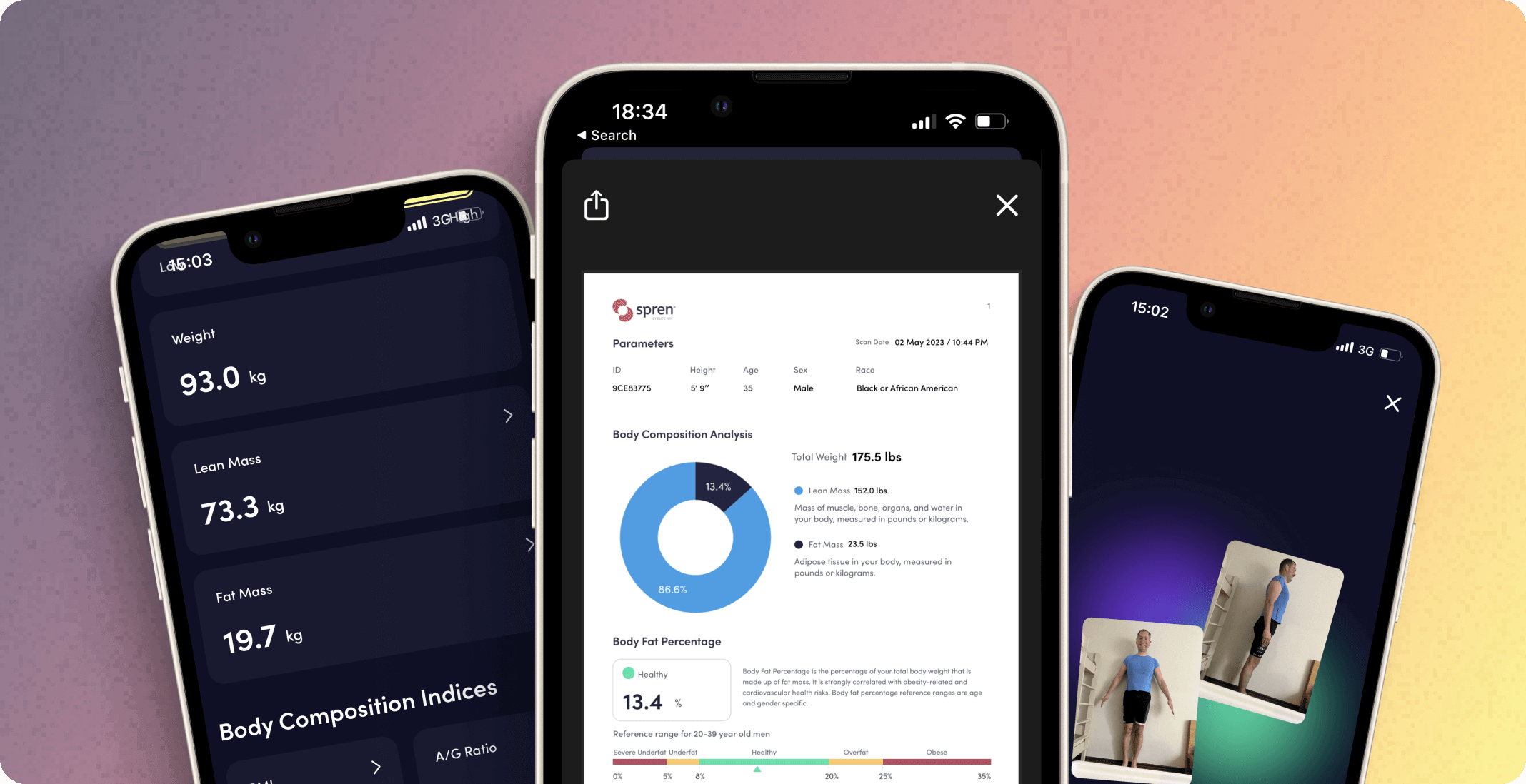

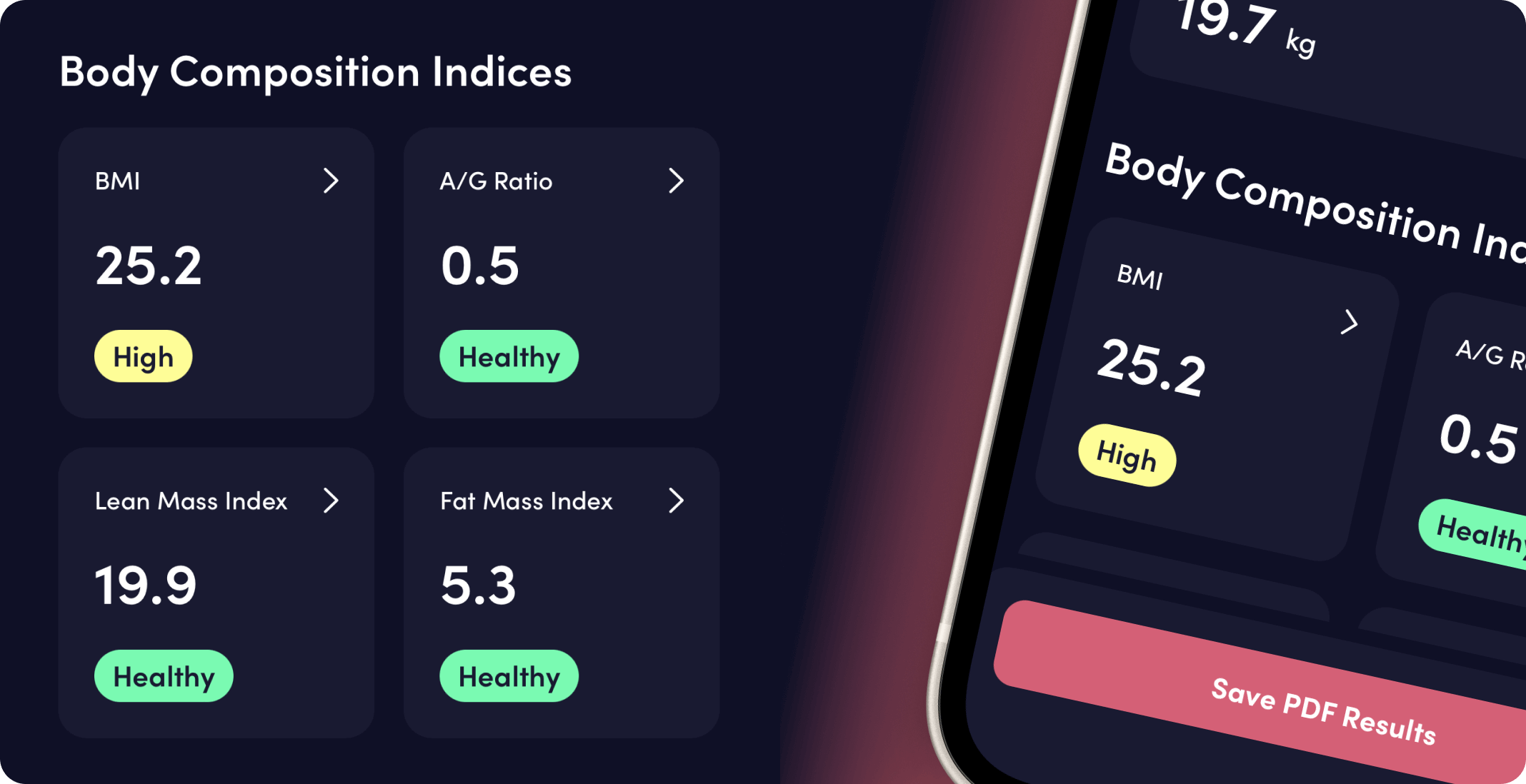

Spren is a bleeding-edge fitness tech startup from North Carolina backed by investors such as Boston Seed Capital and Drive by DraftKings. Their platform uses computer vision and machine learning algorithms to turn users’ smartphone cameras into powerful body composition scanners. By measuring body fat percentage, lean mass, and other parameters, it can provide users with deep insights into their body fat composition, lean mass, and cardio-metabolic health. And, what’s most important, turn that data into actionable insights that can help users optimize their health, performance, and longevity.

Project Overview

Spren saw potential in utilizing generative AI to provide users with recommendations and answers to their questions and increase user engagement. They had an idea to integrate the GPT model into their chat. The model would then combine user context with an industry-specific knowledge base to provide users with reliable answers and recommendations.

Our tasks

GPT Integration

A generative AI chatbot was meant to be implemented into an existing iOS mobile app. It was necessary to connect the internal database, GPT (APIs, agents), and the application’s front-end.

GPT Optimization

To achieve a satisfying level of performance and accuracy, it was necessary to run several experiments, testing different prompts and model parameters. The goal was to shorten the response time to a minimum.

Back-end Optimization

At the same time, it was important to implement several architectural and infrastructural backend solutions inside the app that would handle the chatbot in the most efficient way without causing any delays on the user’s side.

Goals

Increasing user engagement

Through hyper-personalized recommendations, Spren aimed to increase user engagement in the existing app. The engagement was measured with time spent in the app and the frequency of its usage.

Making data more understandable

The idea underlying the project was to help users make use of their performance data. To do so, Spen wanted to present that data in a conversational form through a virtual assistant that would help users understand metrics and provide personalized recommendations.

Improving the app’s performance

Initially, the model required as much as 40 seconds to process the requests and provide users with a response. The goal was to shorten this time as much as possible to avoid friction and frustrations on the users’ side.

The Neoteric team were excellent as a staff-augment to our engineering team. The leadership group took the time to understand our needs and recommend the right augment skillsets. Their engineers work hard and fast, always keeping us on our toes! We’re pleased with the combined end product and look forward to working with the Neoteric team again.

Challenges

Long time to response

In the proof of concept, the chatbot needed ~40 seconds to process every user request, send it to GPT API, and return the answer. With such a long response time, there was a high risk that users would not use the feature.

Unstructured data

The knowledge base used by the model came from different sources, such as articles and video transcripts. The texts were of different lengths and written in different styles and tones – yet, they all served as a knowledge base for the model.

GPT hallucinating

When it comes to users’ health and well-being, the accuracy of the answers and recommendations is of the highest priority. That’s why we needed to find a way to reduce the amount of the model’s hallucinations to the minimum.

Our approach

Testing different models

While GPT-4-32K is the most powerful of the OpenAI LLMs, it doesn’t mean that it’s the best one for every possible use case. Considering the accuracy and cost-efficiency, we used GPT-4 for the large-context requests and text generation and GPT-3.5 for the less complex tasks, such as user context extraction.

Increasing model performance by using embeddings

Every input we had – user queries, user context, knowledge base – was changed into vectors (embeddings) stored in the JSON files. Additionally, the knowledge base content was stored in a Pinecone vector database. It increased the solution’s performance and made it possible to introduce a relevance score to improve its accuracy.

Filtering the knowledge base with a relevance score

To match the right piece of information from the knowledge base with the user request, we used the relevance score, which indicated the similarity between their content. Everything below a certain level of a predefined threshold was automatically filtered out and was not sent to GPT API. That way, we were able to improve the accuracy of the answers and reduce the cost of processing a request.

Basing architecture on LangChain

The framework we used to connect all the elements (GPT, Pinecone vector database, search, etc.) was LangChain. This simplified the architecture and allowed for a faster implementation.

Results

Shortening the bot’s time of response by 95%

Thanks to implemented solutions and optimizations, we managed to decrease the bot’s response time in the mobile app from ~40 seconds to ~2 seconds. This significantly improves the usability of the solution to the end users.

Delivering an MVP ready to be tested with users

One of the goals at this stage of the project was to validate the assumptions and test the idea of introducing an AI-powered assistant to the app. The MVP that we helped to deliver enabled successful tests with users and further product development.