How to prepare for AI implementation

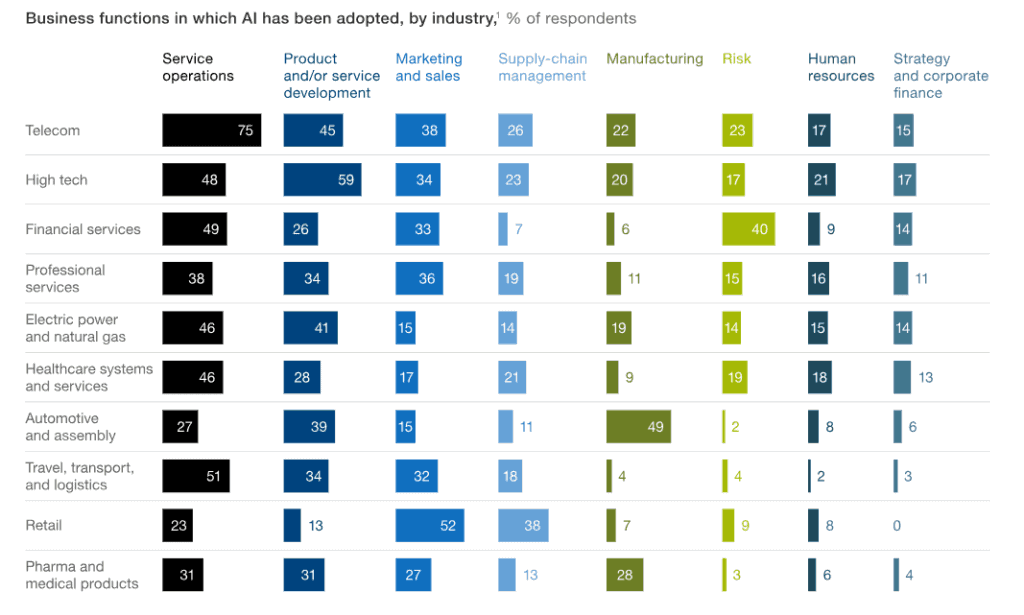

The business world is beginning to adopt AI. Research shows that 47% of organizations have implemented it in at least one function in their business processes, compared to 20% in the previous year. Another 30% of respondents say they are piloting AI. The main sectors to have adopted AI are telecom, high-tech, and financial services companies. There’s a variety of use cases of AI for these sectors but the results show that companies generally follow the money when deploying AI, choosing the most relevant areas of their business.

Source: McKinsey

However, with all the craze surrounding AI, with billboards advertising AI-powered smartphones, Siri and Alexa becoming our everyday companions, and recommendation engines knowing our tastes better than we do ourselves, some businesses jump on the bandwagon for the wrong reasons. Sure, AI deserves the attention, but adopting it just for the sake of being able to say “we use AI” doesn’t make much sense. Following the money does.

When you make your first steps with artificial intelligence, it may be difficult to imagine what the entire process looks like and how to implement AI in your business. What do you need to know? How do you get prepared? Do you need a new team of data scientists? What are the risks? I’ll try to give you answers to some of the most common questions.

Why should you invest in AI?

The answer is simple: it’s profitable. A paper published by McKinsey suggests that three ML techniques (feedforward neural networks, convolutional neural networks, and recurrent neural networks) together could enable the creation of between $3.5 trillion and $5.8 trillion in value each year across nine business functions in 19 industries. This is the equivalent of 1 to 9 percent of 2016 sector revenue. What’s more, PwC research shows global GDP could be up to 14% higher in 2030 as a result of AI, the equivalent of an additional $15.7 trillion, making it the biggest commercial opportunity in today’s fast-changing economy.

AI is said to follow a typical S-curve pattern, starting off slowly but with rapid acceleration as the technology matures and companies learn how to leverage it.

As of now, AI is still more about innovation than about business use cases. Sure, artificial intelligence is innovative, but again: you don’t want it to be just a fancy addition to your business. You want it to work for you and bring profits. When creating an AI implementation strategy, keep your company’s overall business strategy in mind and use technology, AI included, to serve the main business vision.


Are you prepared to implement AI in your organization?

Getting started with artificial intelligence development services is not like setting up an internet connection at the office; it’s a more complicated process. There are some important factors you must consider when implementing AI. At the beginning of the process, make sure you’ve completed these steps:

1. Get familiarized with AI

You probably know what AI can do, so now see how it’s used in business. Observe other companies that have implemented AI successfully. More and more articles, reports, and books are published about a variety of AI use cases. Use them to see what AI solutions proved to be successful and which ones failed. Learn from the examples of other companies but don’t follow their lead blindly. Unless you’re the next Amazon, you most probably don’t need the same AI tech they use. The more you know about AI’s possibilities, the better you understand its potential role in your organization.

2. Find out how your company feels about AI

How do your coworkers feel about adopting artificial intelligence? Are all the decision-makers on the same page? How does the tech team feel about this idea? Have you already adopted AI in any capacity? Talk to your team, see what they think, and learn how much your business is open to this experiment.

As described by Bernard Marr, the author of “Artificial Intelligence in Practice”, most barriers to successful AI adoption are human-related. They include cultural barriers such as resistance to change and fear of the unknown (or the even stronger fear of failure), a shortage of talent, and the lack of a strategic approach to AI adoption. In general, it’s fair to say that a lot of people still don’t fully understand artificial intelligence and its value, and that may cause them to second-guess AI implementation or even ignore the topic altogether because it seems too difficult to start. It’s not that hard, though, provided you do it step by step.

3. Identify the problem(s) you want AI to solve

AI is a great augmentation to human work and, if applied correctly, will optimize processes to help you achieve your objectives. First, you need to identify the area that it can improve. The AI use case should address a specific pain point, be it an internal one within your organization or one that your customers face. At this early stage, it’s good to focus on the things that AI has already mastered, such as prediction, automation, or classification. Now, think about a part of your business that could use that. You can predict, for example, customer behaviors and preferences, demand for products, or prices of resources.

As stated in the 2017 “Where you should use AI and why” Gartner report:

Rather than their experimental or “cool” value, AI projects and pilots should earn their priority based on the needs of organizations considering them.

This is actually something you should keep in mind when considering AI. AI augments your team, supports your business, and, ultimately, helps you achieve your general business goals. That’s how it should be.

4. Get your data ready

You can’t think about AI without thinking about your data. Data is an essential part of AI: a model cannot (and will not) give you any predictions if it’s not fed with large volumes of relevant data. If you don’t know what types of data your company collects, check. It can be information collected through your service (e.g. customers’ purchase history, demographic data, on-site interactions), spreadsheet files (XLS or CSV) containing information about your recent sales, services, and orders, or any other data, including data from your CRM, ad campaigns, email lists, traffic analysis, social media, or even public information, e.g. about your competitors or the prices of resources. Before you start working with AI, you need to know what kind of data you’re dealing with.

Here’s a short checklist of the things you should verify when it comes to data:

- Check the type of data that you have – is it structured or unstructured?

- Specify what data has been collected about the users: demographic, purchase history, on-site interactions, helpdesk contact, other.

- Identify how to find high-quality data (what you have may not be enough now).

- Categorize data by adding metadata, tags, etc. Or is the data already categorized?

- Focus on the right data – don’t just collect all the information there is, collect and access the data that is the most important to you.

If you’re experiencing problems with any of these points, don’t worry. When you start working with a data science team, they will guide you through the process and help you make sense of the data you already have.
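A first pass over the checklist above can be as simple as a data inventory script. The sketch below is one possible approach in plain Python, assuming your data can be exported to CSV. The function name `inventory`, the sample columns, and the rough numeric/text distinction are illustrative assumptions, not part of any particular tool.

```python
import csv
import io


def _is_number(value):
    """Return True if the string can be read as a number."""
    try:
        float(value)
        return True
    except ValueError:
        return False


def inventory(csv_text):
    """Summarize each column of a CSV export: filled cells, missing cells, rough type."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    if not rows:
        return {}
    report = {}
    for column in rows[0].keys():
        # Treat empty strings and absent cells as missing.
        values = [(row[column] or "").strip() for row in rows]
        filled = [v for v in values if v]
        if not filled:
            kind = "empty"
        elif all(_is_number(v) for v in filled):
            kind = "numeric"
        else:
            kind = "text"
        report[column] = {
            "filled": len(filled),
            "missing": len(rows) - len(filled),
            "kind": kind,
        }
    return report


# Hypothetical sample export; replace it with the contents of your own file.
SAMPLE = """customer_id,age,country,last_order_total
1,34,PL,120.50
2,,DE,80
3,41,,
"""

report = inventory(SAMPLE)
for column, summary in report.items():
    print(column, summary)
```

Even a summary this small shows which columns are mostly empty and which ones are structured enough to feed a model, which is a useful thing to bring to the first conversation with a data science team.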

5. Start small and test your assumptions

Implementing a brand new company-wide AI strategy takes time, effort, and money. Instead, choose one segment to test AI in and start with a proof-of-concept to validate the idea. This carries a much smaller risk of failure, as it’s easier to build an efficient system piece by piece, and it leaves room for improvement in each iteration. At the same time, you can quickly see what AI brings: what model parameters were achieved, what results the predictive model provides, and how they can be interpreted. This helps you plan further AI implementation and, if still needed, gives you arguments to convince your executives or coworkers that it’s worth investing in AI now.

So how do you start small with AI?

First, as specified above, you need to create a data inventory. You now know exactly what data you’ve collected.

Then, you identify processes you want to optimize and try to find a match. The “match” means that you should correlate the data you’ve collected with your business goals and challenges. When you look at both the business needs and the data, you’ll most likely see the best starting point.

You should also define the success criteria – know what you want to achieve with this project. Don’t forget to measure the results to see how they compare with your assumptions.
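One way to make the success criteria concrete is to fix a threshold before the pilot starts and compare the model against a naive baseline. The sketch below assumes a demand-forecasting proof-of-concept. The function names, the 10% improvement threshold, and the sample figures are all hypothetical and only illustrate the idea.

```python
def mean_absolute_error(actual, predicted):
    """Average absolute difference between actual and predicted values."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def evaluate_pilot(actual, baseline_pred, model_pred, min_improvement=0.10):
    """Compare a model with a naive baseline against a threshold fixed up front."""
    baseline_error = mean_absolute_error(actual, baseline_pred)
    model_error = mean_absolute_error(actual, model_pred)
    if baseline_error == 0:
        raise ValueError("baseline is already perfect; pick another criterion")
    # Relative reduction in error compared with the baseline.
    improvement = (baseline_error - model_error) / baseline_error
    return {
        "baseline_error": baseline_error,
        "model_error": model_error,
        "improvement": improvement,
        "success": improvement >= min_improvement,
    }


# Hypothetical weekly demand figures, for illustration only.
actual_demand = [100, 120, 90, 110]
baseline_forecast = [110, 110, 110, 110]  # a flat guess, used as the naive baseline
model_forecast = [104, 115, 95, 108]      # what the pilot model predicted

result = evaluate_pilot(actual_demand, baseline_forecast, model_forecast)
print(result)
```

Deciding on the baseline and the threshold before looking at the results keeps the comparison honest: the pilot either clears the bar you set or it doesn’t.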

Potential problems in AI adoption

Though artificial intelligence can be used for any company’s benefit, there are also some issues that you should consider. Here are some of the potential problems in AI adoption that should be addressed.

Data quality and quantity

The model is only as good as the data that feeds it. Artificial intelligence works best when given large amounts of high-quality data. AI systems learn from available information much as people do, but in order to learn about patterns, they need much more information to recognize features or understand concepts.

What’s more, you need to get the data right. If you only use publicly available information, chances are your competitors use the same information, so it won’t give you an advantage. In some industries, it’s also possible that sufficient information isn’t available. But if you do have the data to train a model, you really have to make sure it’s high-quality. The data sets must be representative and balanced or the system will adopt bias from these data sets.
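A quick balance check can be done before any model is trained. The sketch below is a minimal example, assuming a classification problem with one label per record. The `min_share` threshold of 20% and the churn labels are hypothetical; what counts as “balanced enough” depends on the use case.

```python
from collections import Counter


def class_shares(labels):
    """Return the share of each label in the data set."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: count / total for label, count in counts.items()}


def underrepresented(labels, min_share=0.2):
    """List labels whose share falls below the chosen threshold."""
    shares = class_shares(labels)
    return sorted(label for label, share in shares.items() if share < min_share)


# Hypothetical churn data: nine customers stayed, one left.
labels = ["stay"] * 9 + ["churn"]

print(class_shares(labels))
print(underrepresented(labels))
```

A model trained on data like this could reach 90% accuracy by always predicting “stay”, which is why the share of each class is worth knowing up front.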

Legal issues

The legal system fails to keep up with modern-day technology, and without the appropriate laws in place, artificial intelligence may sometimes be difficult to manage. One of the most common concerns is liability when an AI system causes damage. Who’s responsible then? Clearly, it’s the system’s fault, but we need a human to take responsibility for it. If an autonomous vehicle causes an accident, who do we blame? The person who commissioned it? The developer who programmed it? There are no clear answers yet.

While AI-caused accidents may not worry business owners who don’t deploy AI to an extent that allows for such incidents, there’s a more down-to-earth matter: GDPR. Before GDPR, tech giants such as Google or Facebook collected enormous amounts of data every day. That’s great for AI systems that have up-to-date information served to them all the time. But with GDPR, data is no longer treated like a big sack of otherwise useless pieces; it has become a commodity that has to be handled with care. Google and Facebook had to alter their data collection methods. As AI systems will not adapt by themselves, changing the way data is collected required some development work.

Data collection in the era of GDPR is one thing but there’s another twist to it. Under a very strict understanding of the GDPR, the user is allowed to demand an explanation of how their data is processed, so they may ask Netflix why they were given a particular recommendation. Which leads to the next point:

No explanation behind the decisions

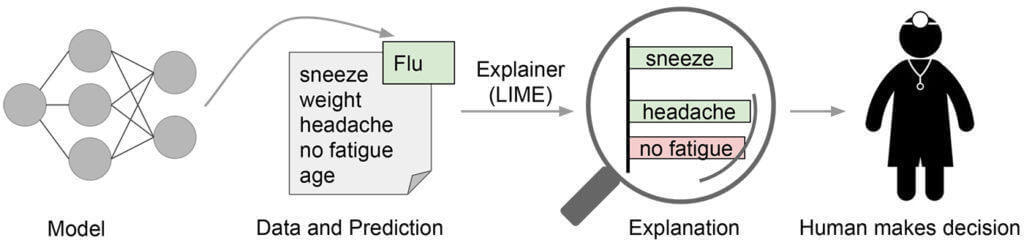

Many models are black boxes: they deliver predictions without giving you insight into the processing, so you know the decision but you don’t know how it was made. Why did the system decide this? Well, it analyzed the provided data and arrived at this decision, but you won’t know any details. And if Netflix has to explain, in detail, how it arrived at the recommendation of a particular film, that may be a problem. There are ways to track the process, but even then: they’ve spent crazy amounts of money and time developing their secret recommendation system, and now they would have to hand over the details.

Right now it’s difficult to understand how decisions in multi-layer neural networks are made. It’s not linear maths, so justifying the predictions can also be difficult. However, there are some approaches that aim to increase model transparency, such as LIME (local interpretable model-agnostic explanations). If the system is asked to provide a prediction to help make a decision, sometimes the explanation is simply necessary. Let’s say a doctor is given predictions on what the patient is suffering from. The doctor cannot simply rely on a prediction that says “flu”. Why flu? What are the factors that led to this diagnosis? If the users are given the rationale behind the decision, it will be easier for them to assess when to trust the model.
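To give a feel for what a local explanation looks like, here is a deliberately simplified sketch. It is not LIME itself (LIME fits a weighted linear surrogate model on many random perturbations of the input); it only probes a black box one feature at a time around a single case. The scoring function `black_box_flu_score`, its thresholds, and the patient data are hypothetical stand-ins for a real model.

```python
def black_box_flu_score(patient):
    """Hypothetical stand-in for an opaque model; returns a score between 0 and 1."""
    score = 0.1
    if patient["temperature_c"] >= 38.0:
        score += 0.5
    if patient["cough"]:
        score += 0.25
    if patient["age"] > 65:
        score += 0.125
    return min(score, 1.0)


def local_contributions(model, instance, baseline):
    """Estimate how much each feature moves the score for this one instance.

    Each feature is reset to its baseline value in turn, and the resulting
    drop in the model's score is recorded as that feature's contribution.
    """
    full_score = model(instance)
    contributions = {}
    for feature in instance:
        perturbed = dict(instance)
        perturbed[feature] = baseline[feature]
        contributions[feature] = full_score - model(perturbed)
    return contributions


# Hypothetical patient and a "healthy" reference point of the same age.
patient = {"temperature_c": 39.2, "cough": True, "age": 40}
baseline = {"temperature_c": 36.6, "cough": False, "age": 40}

contributions = local_contributions(black_box_flu_score, patient, baseline)
for feature, effect in sorted(contributions.items(), key=lambda item: -item[1]):
    print(f"{feature}: {effect:+.3f}")
```

Here the output tells the doctor that the high temperature carries most of the weight behind the prediction, the cough adds to it, and age plays no role for this patient. This is the kind of rationale that makes a prediction easier to trust or to challenge.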

Moral and ethical issues

The list of debated topics concerning AI and ethics is quite extensive. There are a number of issues people see, starting with the system being biased. There are cases when the system is biased against a given group of people, as in the example of software that was used to predict crimes and turned out to be biased against black people.

As I’ve mentioned before: the system is only as good as the data it’s learning from. The algorithm groups data in a way that it learned from the data and does not verify the data’s correctness. So, if the data it’s given reflects racism or sexism, the system will follow that as a rule. You have to be extremely careful to make sure that your algorithm is fair.
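One simple check, among many possible ones, is to compare how often the system produces a favorable outcome for each group. The sketch below assumes you have logged decisions together with a group attribute; the field names `group` and `approved` and the sample records are hypothetical. Equal rates are only one of several notions of fairness, so a large gap is a prompt to investigate rather than proof of bias.

```python
from collections import defaultdict


def positive_rate_by_group(records, group_key, outcome_key):
    """Share of favorable outcomes within each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for record in records:
        group = record[group_key]
        totals[group] += 1
        if record[outcome_key]:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def largest_gap(rates):
    """Difference between the best-treated and worst-treated group."""
    return max(rates.values()) - min(rates.values())


# Hypothetical logged decisions, for illustration only.
decisions = [
    {"group": "A", "approved": True},
    {"group": "A", "approved": True},
    {"group": "A", "approved": True},
    {"group": "A", "approved": False},
    {"group": "B", "approved": True},
    {"group": "B", "approved": False},
    {"group": "B", "approved": False},
    {"group": "B", "approved": False},
]

rates = positive_rate_by_group(decisions, "group", "approved")
print(rates)
print(largest_gap(rates))
```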

Lack of technical knowledge

AI for business is an emerging field and the number of experts is limited. AI adoption requires the support of data scientists and other subject matter experts who may be difficult and expensive to hire. What’s more, you can’t be sure you’re getting the right people. Unless you’re an expert as well, you won’t know whether your new data scientist is good at their job or not.

Small and medium enterprises often don’t have enough budget to follow through with their AI project. With the scarcity of experts available and the high costs, it may be easier to find a vendor for your AI. What’s more, when you outsource the AI team, you can have a look at their portfolio to see what projects they’ve delivered. AI adoption is a time-consuming and expensive process, and having an external team mitigates the risk: you can start with a small part of the system (PoC) to see how the cooperation is going.

Next steps

When you’re aware of the promises and threats AI implementation presents, it’s easier to prepare for the process itself.

Even when you’re not sure about the right AI use case for your company, you can still explore the options. The truth is that AI will be gaining more and more popularity, so it’s good to understand it now when it’s still making its first steps into the business world. It will be much more difficult to learn and use AI to your advantage when everybody else has already deployed their systems.

With artificial intelligence, it’s not “go big or go home”. It’s a company-wide change that your organization and staff have to adjust to, so it’s reasonable to start small. You can use experts’ help to crystallize the idea for AI in your business or to choose the best model to support your operations, and then get to the proof-of-concept before you develop the entire AI strategy for the future.

Artificial intelligence may seem difficult to comprehend but with the right people on board, you’ll see that it’s not that scary. And the benefits? Well, the examples of giant companies like Facebook, Google, Amazon, Netflix, Uber or even McDonald’s should speak for themselves.